From time to time, I'll attend industry events where people discuss the future of CAD. The presentations are always heavy with technological jargon – acronyms like ML, VR, IoT, AI, DFMA, AR, and word pairs like generative design, digital twin, 3D printing, big data. We often seem to assume that the future of CAD is tied to the future of technology – if we can only make sense of where technology is going, if we can only decipher the jargon, we'll know where CAD is headed. Yet as I sit through these industry events, I'm not sure that's always true.

In some ways, it's no surprise that people interested in CAD are interested in technology. If you're the type of nerd that goes to CAD conferences (like me), this is exciting stuff. And it's not like it's irrelevant. Among that bingo card of acronyms and word pairs, there are undoubtedly concepts that will redefine the industry. But at the same time, I think we often limit ourselves by looking at the future of CAD exclusively through the lens of technology.

When I look at CAD today, the most exciting trends have nothing to do with technology. In this article, I want to focus on two of them: the increasing use of sophisticated computational design tools on regular, mundane architecture; and the rise of CAD software that addresses narrow verticals in the market.

Against a backdrop of fast-approaching technological breakthroughs, these two trends may seem a little boring, perhaps a little too prosaic. But I think they're interesting because they represent a major shift in how we use computers in the design process, albeit a shift that you don't really hear discussed when people talk about the future of CAD.

Fat Middle over Long Tail

New York isn't all Empire State Buildings and Brooklyn Bridges. Brownstones are a repetitive typology found throughout the city – Goodmanphoto via stock.adobe.com

When I moved to New York, I was surprised to discover that most buildings in the city, a city famous for its design, fashion, and architecture, were actually pretty ordinary. I mean, don't get me wrong, there are some fantastic buildings in New York. But if you're walking down a random street, you're probably not going to pass them. Most of the buildings are fairly repetitive. They follow a standard formula the same way houses in the suburbs follow a formula, the same way shopping malls, airports, and office buildings follow a formula.

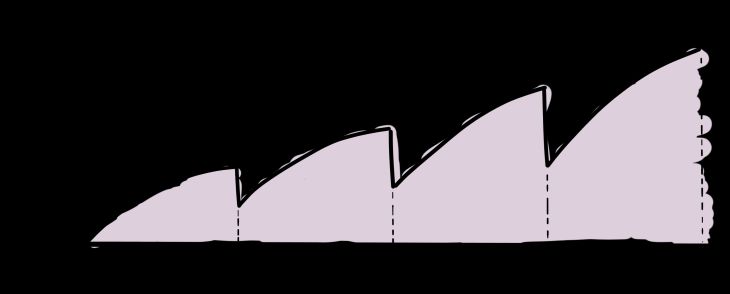

Despite what you see in the magazines (or Instagram), most of the built environment consists of unexceptional buildings. That is to say, if you were to gather every building in the world and organize them from the most unoriginal to the most unique, you'd have a lot of buildings down the 'unoriginal' end (mountains of McMansions, gas stations, and apartment buildings). But you'd have only a handful of projects down the 'unique' end (things like Frank Gehry's Guggenheim Bilbao and Antoni Gaudí's Sagrada Família). Of course, the unique buildings garner the most attention, occupying an outsized place in the media and our minds. But they're not the most common. In reality, architecture follows a long-tail distribution – there are tons of unoriginal buildings and just a few truly unique ones.

Architecture follows a long-tail distribution – the majority of projects are relatively unoriginal while just a few are truly unique. Designers and the media focus a lot on the long tail, yet most of the built environment isn't in this unique end of the spectrum.

In the early 2000s, around the time Chris Anderson popularized the term 'long tail' in a book and article of the same name, the frontier of CAD was the unique end of the distribution. At that time, most CAD software could handle regular, orthogonal projects. But if you were to throw in a slanted wall or a doubly curved surface, the programs would inevitably fail. To get around these limitations, designers needed to get creative. Only elite architects, like Frank Gehry, could pull off these technological feats. Everyone was aware of how improbable these projects were. Some jumped at the challenge, sparking a game of one-upmanship where designers flaunted their technical dexterity through ever more tricky designs. This launched a strange period of architecture where the criteria for good design were generally: the harder a building was to model in CAD, the harder it was to construct, the more unique it was, the better.

During this time, many of the industry's best and brightest worked to push the boundaries of what was computationally possible, helping realize buildings that were right at the end of the long tail. I graduated architecture school around this period, and while I wouldn't include myself in the best and brightest, I was definitely swept away by them. All around me, people were pouring their efforts into parametric models and optimization techniques that didn't necessarily enable better buildings, just more difficult ones. Parametric tools like Generative Components and Grasshopper became popular, not because of how well they did the regular stuff, the vertical walls present in 99% of projects, but rather because of their ability to do the unique stuff. CAD companies took note. They released updates that allowed their software to stretch further and further down the uniqueness spectrum.

Designers weren't pushing the bounds of uniqueness just for the sport of it. Underlying these efforts was a feeling that architecture was limited by its design tools. By pushing against these constraints, we all implicitly hoped that it would expand the design vocabulary and open new avenues of expression. Presumably, the technical impediments would wear away with time, and it would become easier and easier to create similar designs. Patrik Schumacher predicted that soon it would become viable for every building to be unique. Rather than producing mountains of unoriginal projects, we would have a new style of architecture – parametricism – where every project was curved, irregular, and different. Schumacher essentially predicted that the long tail would flip.

Of course, this didn't happen. The search for ever more unique designs eventually reached a point of diminishing returns – at a certain point, uniqueness became repetitive. Like a magic trick that was used one too many times, these projects were no longer surprising or unexpected. And without the novelty, there wasn't much worth celebrating. It's not as if the projects produced during this period were any more affordable, abundant, sustainable, or pleasant to inhabit.

The fat middle is where the opportunity lies for computational design. These projects aren't repetitive, but they follow a formula. Rather than using computational design to make a couple of extraordinary buildings, designers are increasingly using these tools to make lots of mundane buildings better.

At the present moment, there seems to be a waning enthusiasm for architecture that pushes the boundaries of the long tail. In its place, people appear to be shifting their focus to the middle of the uniqueness spectrum.

For example, consider the recent crop of site feasibility software from companies such as TestFit, Archistar, and Spacemaker. These companies all do much the same thing – they make software that allows you to select a site and quickly generate a massing model and a pro forma. It sounds simple enough, but software that automatically generates a building is cutting-edge stuff. A decade ago, you would only find this level of computational prowess on big, expensive projects by famous architects. But here, it's being applied to ordinary apartment buildings, car parks, and hotels. These are projects right in the middle of the uniqueness spectrum – each project is a little different, generally due to site constraints, but they never stray too far from a template of fairly conventional project typologies. And that's fine.

I saw a similar thing at WeWork a couple of years ago. Back then, WeWork had an incredibly sophisticated method for delivering projects. Technologically, it was far beyond anything I'd seen elsewhere in the industry. But when you walked into one of WeWork's coworking spaces, it just looked like a regular office with nice chairs and fancy couches. There was nothing about it that screamed: this is the absolute pinnacle of what's possible in CAD right now; this is the product of advanced computational algorithms, laser scanning, and a polished pipeline of BIM data; this is what billions of dollars in technology buys you. Instead of using computation to make a unique office, the technology was being put to work in other ways, making these reasonably regular offices more efficient and more pleasant to inhabit.

This shift to the middle is interesting because you're seeing so much computational expertise being brought to bear on such ordinary projects. I know that calling these projects 'ordinary' sounds like a critique, particularly if, like me, you come from a generation where you were rewarded for difficult and unusual projects. But I think there is dignity in the ordinary. Most of the built environment isn't landmark projects with big budgets. It's standard, inconspicuous projects that you don't think twice about. This is where the opportunity lies – the fat middle of architecture¹. These projects are incredibly challenging because there are so many financial constraints (you can't afford to get too wild) and practical constraints (you can't duplicate past work and expect it to work on a confined site). It's a perfect problem for computational design. More and more, I think you're going to see CAD turn its attention to this segment of architecture, shying away from the extraordinary in favor of making mundane buildings better.

Verticals over horizontals

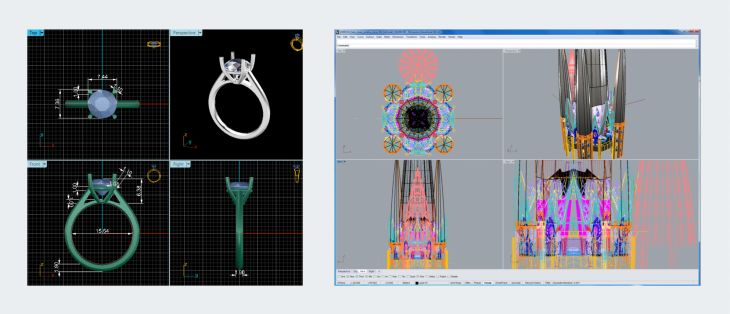

Left: Rhino screenshot of the engagement ring. Right: Rhino screenshot of the Sagrada Família.

A couple of months ago I got engaged². Obviously, this is a big story, so in the interest of time, I'll tell you one tiny, unexpected detail: the jeweler used Rhino. Admittedly, this isn't part of the engagement story that I usually tell friends and family, but since you're here to read about the future of CAD, the jeweler using Rhino is a pretty big deal.

I'm a massive fan of Rhino. I used it for many years when I was working as part of the team completing the design of Antoni Gaudí's Sagrada Família. I liked using it on architectural projects, but I expected a jeweler would prefer using specialized, ring-designing software. So when they emailed a mockup of the ring, I was surprised to see that they'd sent through a screenshot from Rhino. It seemed weird that this tool I'd used to model a massive church would be the same software they'd use to model the fine details of a ring. I mean, sure, they're both momentous objects, but they're just so different.

Most CAD software addresses a horizontal market. You could use it to design everything from a single-family house to an urban master-plan.

Most CAD software addresses a horizontal market. That is to say, the software serves a range of industries rather than targeting customers in a particular segment. Rhino is the perfect example. Their software isn't exclusively for jewelers, nor is it solely for architects. Instead, they make CAD software that works in virtually any market segment, from engagement rings to 19th-century churches and beyond. Even software like Revit, which focuses on a single vertical (architecture), takes a reasonably expansive approach, developing software that can be used to design everything from a house to a skyscraper.

It's easy to see why CAD companies take this horizontal approach. If you've built a tool that can draw four walls and a roof, it makes sense to sell that functionality to everyone that could possibly want it, even if they come from seemingly disparate segments of an industry. But there are downsides to this horizontal approach, particularly regarding the specificity of features and data exchange.

Specificity

The challenge with developing CAD software for a horizontal market is that it has to generalize across a range of use cases. Just consider the differences between designing a house and a skyscraper. A house might be designed by a sole practitioner who only needs to create a basic set of plans for a builder. On the other hand, a skyscraper may involve a vast team of specialists working collaboratively on everything from fire suppression systems to facade elements. These two project typologies couldn't be further apart, yet we expect Revit to accommodate them both, and then some.

Because Revit stretches across so many different typologies, it struggles with the specifics of each of them. For example, people using Revit to design apartment buildings have complained for over a decade that Revit can't count the number of units in a building. Sure, you can futz around with room schedules, write a Dynamo script, or buy a plugin to export and display this data, but all of these feel like workarounds. Software that specializes in designing apartments, such as TestFit, performs these calculations out-of-the-box. So why does Revit make it so complicated?

Many of us will blame Autodesk and say that they're not listening or that they're moving too slowly. But I'd like to posit an alternate explanation: Revit is horizontally integrated. In most cases, it's better for Autodesk to add a feature that benefits their entire user base rather than add a feature that only serves one vertical. So in the case of counting residential apartments, it's better for them to create generic tools like Dynamo³ than develop specific tools like an apartment counter. Obviously, if you're a residential architect, it feels like a lot of extra work for every firm to build their own unit counter in Dynamo, but this is by design – it's a natural byproduct of the horizontal model.
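To give a sense of what one of these homegrown unit counters boils down to, here is a minimal sketch in plain Python. It doesn't touch Revit or Dynamo at all. It assumes you've already exported a room schedule into rows of (room name, unit id, unit type), and those field names, along with the sample data and the `count_units` helper, are made up for illustration.

```python
from collections import Counter

# Hypothetical rows exported from a room schedule: (room name, unit id, unit type).
# The fields and values are invented for this example, not a real Revit export.
ROOMS = [
    ("Living", "A-101", "1 bed"),
    ("Bedroom", "A-101", "1 bed"),
    ("Living", "A-102", "2 bed"),
    ("Bedroom 1", "A-102", "2 bed"),
    ("Bedroom 2", "A-102", "2 bed"),
    ("Studio", "A-103", "studio"),
    ("Corridor", None, None),  # shared space that belongs to no unit
]


def count_units(rooms):
    """Return (total number of units, Counter of units per unit type)."""
    unit_types = {}
    for _name, unit_id, unit_type in rooms:
        if unit_id is None:
            continue  # skip rooms that aren't part of an apartment
        unit_types[unit_id] = unit_type  # many rooms collapse into one unit
    return len(unit_types), Counter(unit_types.values())


total, by_type = count_units(ROOMS)
print(total, dict(by_type))
```

The logic is trivial, which is sort of the point: the hard part isn't the counting, it's that every firm has to agree on how rooms are tagged with a unit and then maintain the script themselves.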

Data exchange

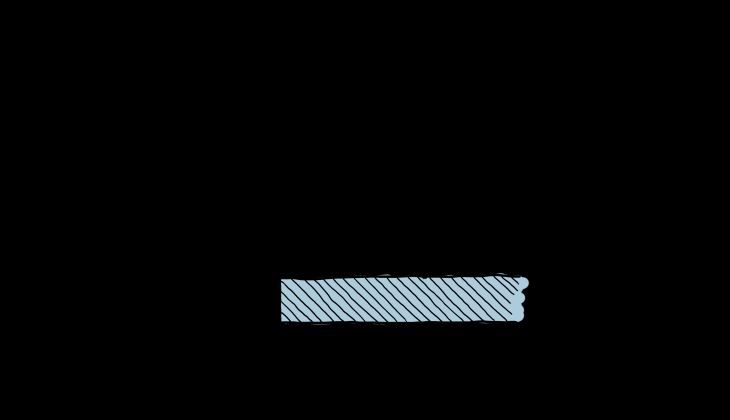

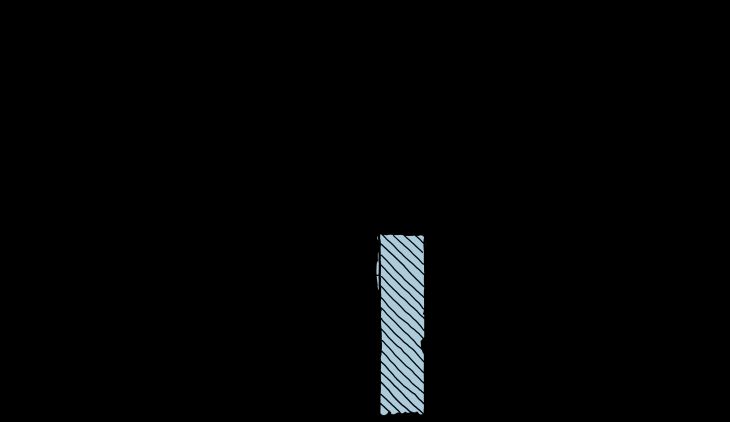

The sawtooth diagram, which shows how information is lost over the course of a project.

The horizontal model introduces another problem: the exchange of data from one software to the next. Throughout a project, data doesn't stay in one application. Instead, it passes from one software to the next. At these points of exchange, information is inevitably lost. This is perhaps best illustrated by Phil Bernstein's⁴ sawtooth diagram, showing how knowledge accumulates and disappears at points of exchange over the lifecycle of a project.

People have blamed this information loss on many things besides horizontal software. Perhaps it's the industry's adversarial nature hindering data exchange and cooperation. Or poor data standards and proprietary formats that make it hard to translate information from one software to the next. Or even a lack of technical knowledge from project leaders that don't know how to manage this process.

But let's be honest, this problem only exists because of horizontal integration. Each phase of the project has its own software. Designers have specialized design tools, contractors have tools for overseeing construction, and owners have software for managing properties. The transition between software is where information is lost. The sawtooth diagram effectively illustrates waves of information crashing into the interfaces between horizontal software – some of it gets through, but a lot doesn't.

Verticals

A number of startups are taking a different approach and going vertical, offering more specific design tools for a narrow niche.

Many of our frustrations with CAD software can be attributed to the horizontal market. Even seemingly intractable problems like data exchange and a lack of specific features seem to have their origins, at least partly, in the horizontal nature of most software. This is why it's interesting that some companies are beginning to take a different approach, focusing on verticals over horizontals.

Take, for example, Saltmine, a specialist tool for creating interior office spaces. Given Saltmine's narrow focus, they can offer specific tools for workplace projects, from strategy to test fits, layouts, and costing. They even touch on space management with tools to help survey end-users and visualize space utilization. But that's all Saltmine does. You can't use Saltmine to design a stadium, house, or restaurant. You can't even use it to create the exterior of an office building. All it does is help you plan, design, and realize interior office space.

One of Saltmine's main selling points is that it's an all-in-one solution. On their website, they emphasize how "data flows dynamically between each module" of their software. This isn't the type of data exchange envisioned by interoperability standards like IFC, and it definitely isn't the type of data exchange that's available between standard horizontal software. But because Saltmine focuses on a single vertical, they can move data around relatively seamlessly.

Saltmine isn't the only software in this vertical. In the last year or so Canoa, Haworth, and even Gensler have all developed software focused exclusively on workplace interiors. The same thing is happening in many other design verticals. So why the sudden shift away from horizontal software?

One explanation is that software has become easier to make. A decade ago, if you said that you were going to make your own web-based design tool, most people would have thought you were mad. Today, it has got to the point where a company like Haworth, a furniture manufacturer, can develop their own interior office software mainly as a marketing exercise. When software is cheap, there's less pressure to maximize your investment by expanding horizontally⁵.

Another explanation is that design firms are under increasing pressure to focus. Historically, architecture practices sold the same service to a broad range of industries. They might design a hospital for a healthcare provider, a stadium for a local government, and a house for a private individual. In many ways, they were horizontally orientated, so it made sense to sell software that matched this model (firms wanted a single piece of software that could do it all). But as the book The Future of the Professions explains, firms are under pressure to be more efficient and more specialized. One way of doing this is to go vertical and focus on just one market segment. The firms that narrow their focus no longer need software that can design hospitals, stadiums, and houses. Instead, they just want to serve their market segment, their vertical, really well. It makes sense that the software would follow.

It's still early days with many of these startups addressing narrow verticals, but it seems like a significant development. We're so accustomed to thinking of CAD software like Rhino, Revit, and AutoCAD as these broad, horizontal platforms. It's interesting to consider a marketplace where there are far more vertical options.

Lightning round

Before ending this article, I'd like to quickly cover three trends that don't quite warrant their own section but fit this pattern of CAD being influenced by things that lie outside the technology. So briefly:

- UI over AI: There's a lot of hype around the potential of AI and machine learning in architecture. While these algorithms show potential, at the moment they can only do modest design tasks (such as laying out desks in an office). For everything else, you need a human. Many people are currently focusing on the technology side of AI, but it's the human side, the UI, the way that people and machines work together, that will ultimately dictate the success of these tools. I expect the most significant breakthroughs in the coming years won't be better algorithms but better interfaces.

- Web over desktop: For ages we've been talking about how CAD belongs on the desktop due to its graphical nature. But let's face it, most designers aren't zooming around in 3D all day. Instead, they're looking at 2D plans and entering data into Revit (which is basically a fancy spreadsheet). Companies like Google have already shown that cloud infrastructure and web interfaces are perfect conduits of data. This isn't a new technology but rather a change in mindset. It's taken a while for people in the architecture industry to get to this stage, but you're finally seeing a new generation of CAD startups that are bucking the conventional desktop wisdom and taking a web-first approach.

- Made-to-measure over mass-manufactured: For some reason, we hold up the aeronautical and automotive industries as the future of construction. Even today, startups are touting their ability to build houses like we build cars. Or planes. Or rockets. It's a bad precedent because none of these things have the same site and client constraints as architecture. A better precedent is fashion, especially suits. There was a point in time when every suit was bespoke (you'd have to go to a tailor to get it made). Then came the industrial revolution and one-size-fits-all clothing. Recently, there's been a resurgence in made-to-measure clothing, which essentially follows a fairly standard pattern that is parametrically adjusted to fit the wearer's body. Some new companies, like Generate, seem to be going down a similar path, using technology to create parametrically adjusted made-to-measure buildings for given sites. From my perspective, it looks like a far more likely path to success than imposing one-size-fits-all mass manufacturing on the industry.
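To make the made-to-measure idea a little more concrete, here is a minimal sketch of a standard pattern being parametrically adjusted to a site. It isn't how Generate or any other company does it. The `Template` class, the `fit_to_site` function, and all of the dimensions are assumptions I've invented for illustration: a building template has a nominal bay width with some allowable stretch, and the fitting step picks a bay count and bay width that fills the buildable width of the site.

```python
from dataclasses import dataclass


@dataclass
class Template:
    """A hypothetical building pattern defined by its structural bay (metres)."""
    bay_width: float  # nominal bay width
    min_bay: float    # narrowest bay the pattern tolerates
    max_bay: float    # widest bay the pattern tolerates


def fit_to_site(template, site_width, setback):
    """Return (bay count, adjusted bay width), or None if the pattern can't fit."""
    buildable = site_width - 2 * setback
    if buildable < template.min_bay:
        return None  # not even one bay fits

    # Start from the nominal bay width, then stretch or squeeze the bays.
    bays = max(1, round(buildable / template.bay_width))
    width = buildable / bays
    while width > template.max_bay:
        bays += 1
        width = buildable / bays
    while width < template.min_bay and bays > 1:
        bays -= 1
        width = buildable / bays

    if not (template.min_bay <= width <= template.max_bay):
        return None  # no bay count lands within the allowed range
    return bays, width


apartment = Template(bay_width=6.0, min_bay=5.4, max_bay=6.6)
print(fit_to_site(apartment, site_width=40.0, setback=3.0))
print(fit_to_site(apartment, site_width=10.0, setback=3.0))
```

Like a made-to-measure suit, the pattern stays the same from project to project and only a few measurements change. A real system would juggle far more parameters (height limits, cores, parking, and so on), but the principle is the same.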

The boring future

So there you have it. I made it through an entire article on the future of CAD without mentioning AR, IoT, or generative design. You're welcome!

Compared to the wave of technological breakthroughs happening right now, some of the topics covered in this article probably feel a little boring. Designers using sophisticated technology to create ordinary buildings in the fat middle probably aren't as interesting as Facebook trying to create an augmented reality metaverse. And companies adopting a vertical approach to CAD software aren't as exciting as the work being done on AI and machine learning. But I find these developments interesting because they represent major shifts in how designers use CAD, and they're happening almost independent of what's going on technologically. And as a nerd who goes to CAD conferences, I find that a bit exciting.

Footnotes

1: Alternatives considered: the thicc middle of architecture, the architectural beer belly.

2: To give you an idea of how long this article has taken to write, I've since gotten married.

3: Technically, Autodesk didn't create Dynamo. But the point still stands. It often makes more sense for them to create something like Dynamo or to support it than build a specific feature.

4: I'm fairly sure Phil created the sawtooth diagram but let me know if I'm wrong about its provenance.

5: Interestingly, there isn’t an underlying platform that all these companies are using. So it’s not like someone created Rhino for the web and all these companies were built on top of it. Instead, the underlying advancement seems to be simply that it’s become easier to develop software in general, particularly for the web. Obviously, companies like Hypar are vying to become that platform, and it feels like a big opportunity.

Thank you to the audiences at the co.de.D and Connect conferences who heard early drafts of this article and provided a lot of great feedback.
